Clusters

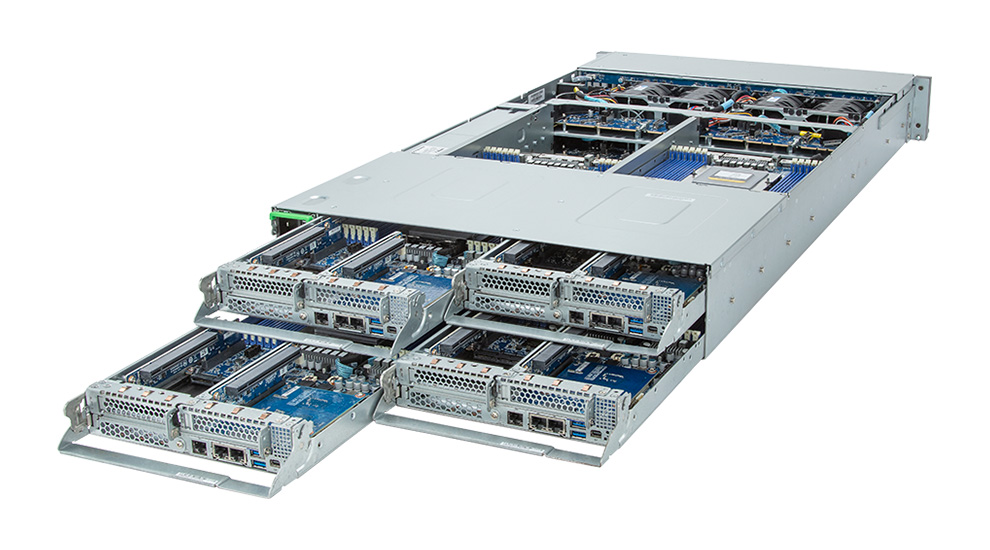

Navion™ Clusters with AMD EPYC CPUs

Navion clusters integrate the latest 4th Gen AMD EPYC “Genoa” CPUs. They scale from small departmental clusters to large-scale, shared HPC resources.

What Makes a Microway Cluster Unique?

Comprehensive Burn-In Testing by Microway

Each piece of hardware (CPUs, memory, GPUs, and more) is tested for up to 72 hours with test suites known to elicit faults. Our team filters out early component failures so you can be up and running on day one after delivery.

Full Software Integration by Linux, HPC, & AI Experts

Systems Integrators update all firmware and resolve all driver conflicts. Then they load your choice of OS, compilers, MPI flavor, cluster management software, and scheduler.

Cluster Validation

Only after these first steps pass our exacting quality-control standards does our team validate the performance of every cluster by running real MPI jobs on it. They then validate the fabric/network and storage for performance.

Architected by Actual HPC & AI Sales Engineers

Every Microway cluster is architected from the ground up by our team of Sales Engineers, who have the expertise to design for maximum performance on your application.