
NVIDIA GPU Solutions from Microway

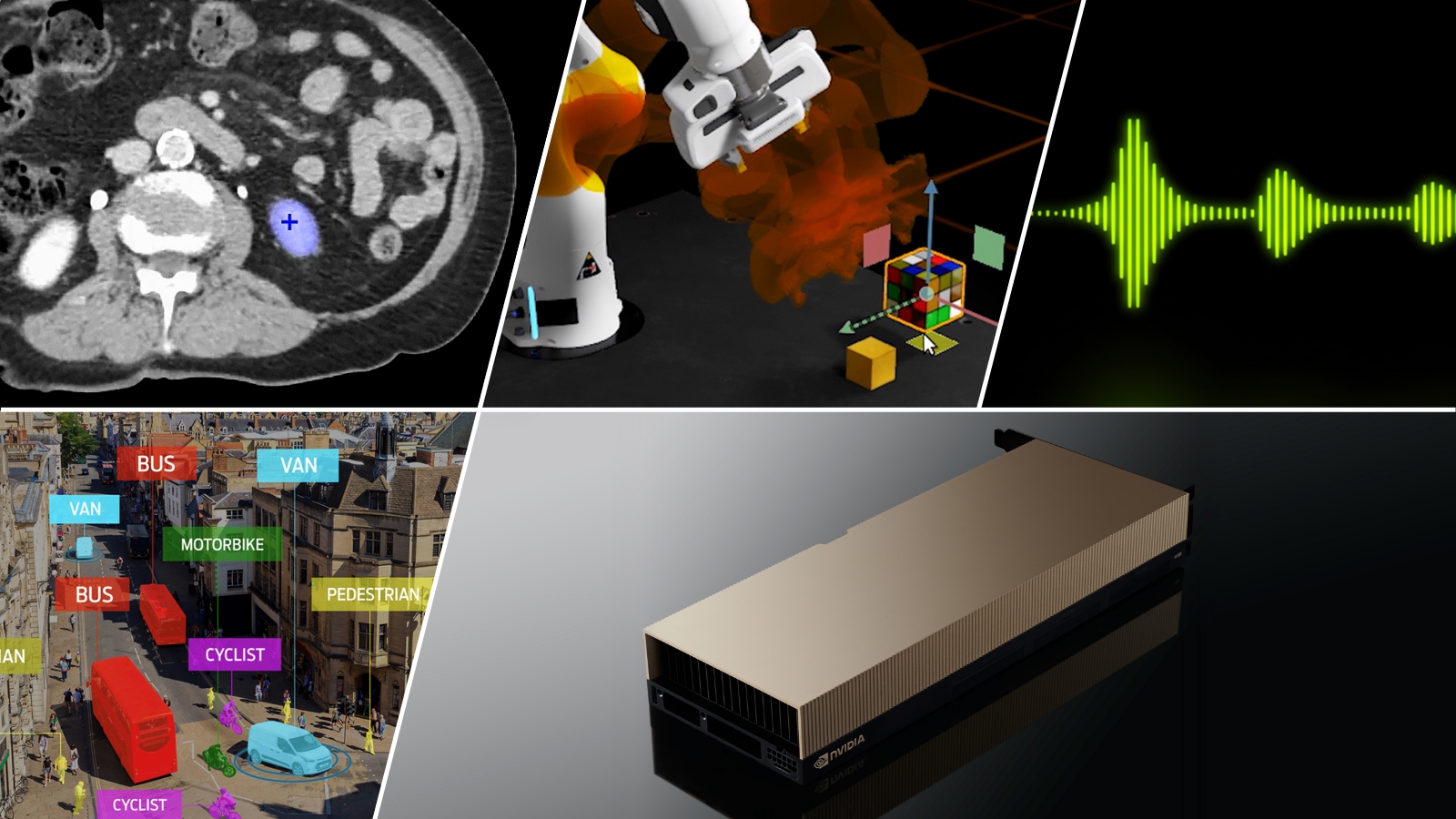

NVIDIA Datacenter GPUs are the leading acceleration platform for HPC and AI. They offer overwhelming speedups on thousands of applications and are the computational engine for nearly every accelerated computing workload. As an NVIDIA NPN Elite partner, Microway can custom-architect a bleeding-edge NVIDIA GPU solution tailored to your application or code.

WhisperStation – RTX Professional

Ultra-Quiet workstation with NVIDIA RTX Professional series GPUs for visualization, simulation, and AI

NVIDIA GPU Servers

GPU Servers with up to 10 NVIDIA Datacenter GPUs

GPU Clusters

Custom AI & HPC clusters from 5 to 500 GPU nodes

NVIDIA DGX

Leadership AI deployments built upon the NVIDIA DGX™ Platform

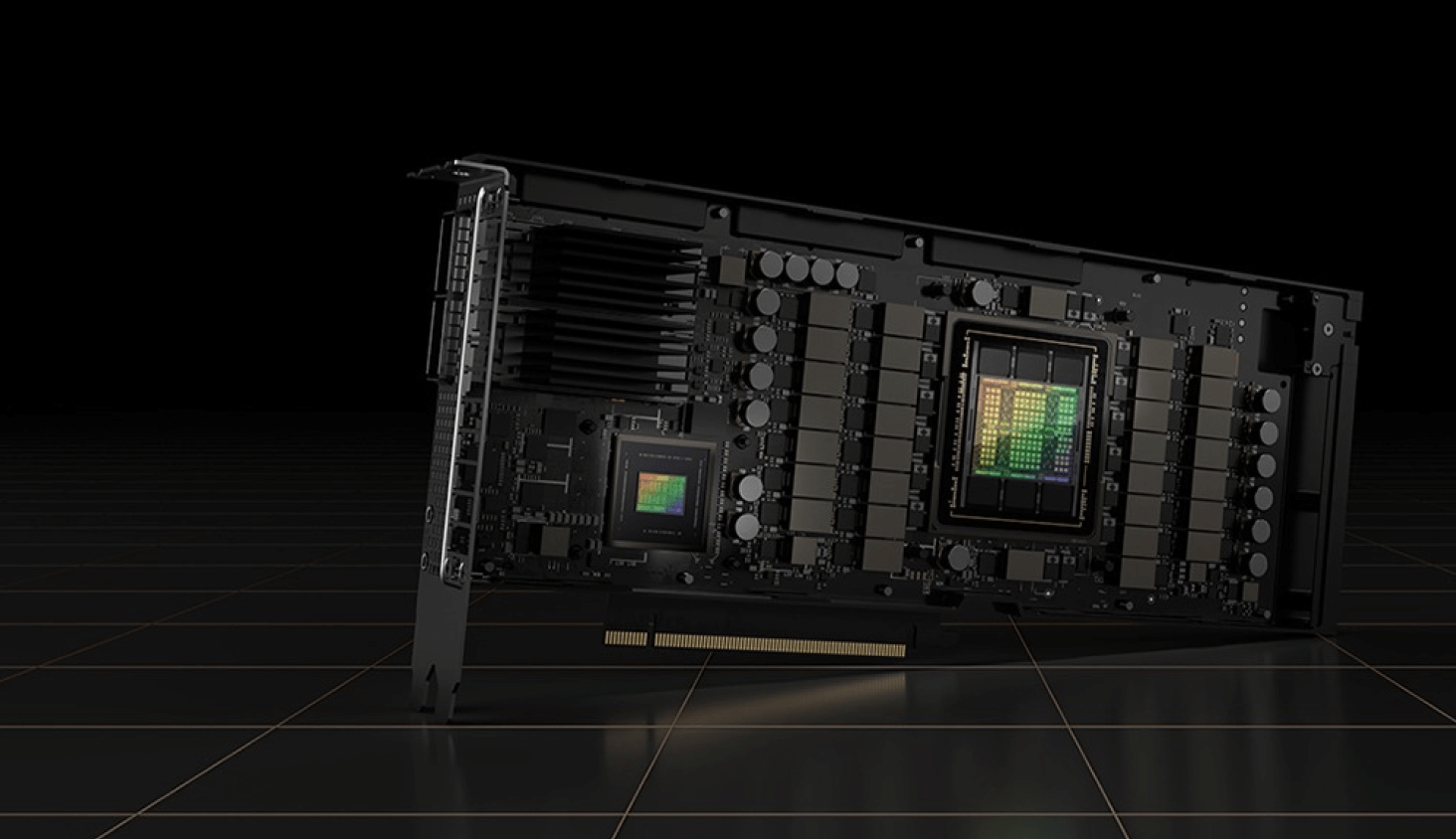

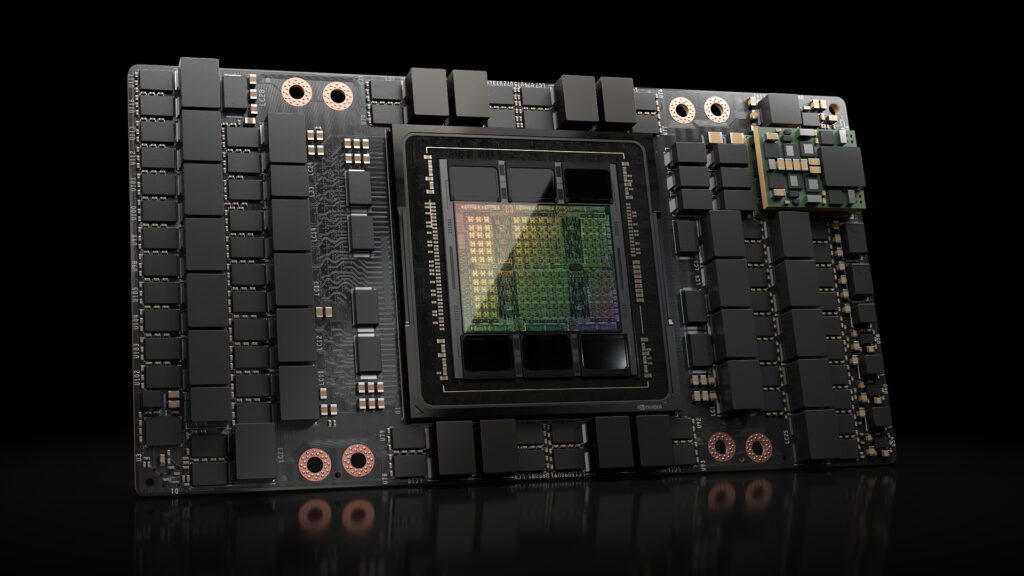

NVIDIA H200 & NVIDIA H200 NVL GPUs

The NVIDIA H200 and NVIDIA H200 NVL Tensor Core GPUs are supercharging AI and HPC workloads with:

- Higher Performance With Larger, Faster Memory

141GB of HBM3e operating at up to 4.8TB/s, 1.5X the capacity and 1.4X the memory bandwidth of NVIDIA H100

- Up to 1.3X the HPC performance

Increased performance over NVIDIA H100 from gains in memory capacity and bandwidth for supercharged high performance computing

- Increased performance for AI Training and Inference over NVIDIA H100/H100 NVL

1.7X-1.9X the LLM inference performance of NVIDIA H100 NVL/H100 and improved training performance. Up to 5X the training performance of NVIDIA A100 on LLMs

- All the existing features of NVIDIA H100 and the NVIDIA Hopper™ architecture

NVIDIA L40S GPUs

NVIDIA L40S GPUs are outstanding general purpose GPU accelerators for a variety of AI & Graphics workloads

- Leading Graphics and FP32 performance

Up to 91.6 TFLOPS FP32 single-precision floating-point performance

- Excellent Deep Learning throughput

362.05 TFLOPS of FP16 Tensor Core (733 TFLOPS with sparsity) AI performance; 733 TOPS Int8 performance (1,466 TOPS with sparsity)

- Large, Fast GPU memory

1.2-1.7X the AI training performance of NVIDIA A100

Why NVIDIA Datacenter GPUs?

Full NVIDIA NVLink Capability, Up to 900GB/sec

Only NVIDIA Datacenter GPUs deploy the most robust implementations of NVIDIA NVLink for the highest bandwidth data transfers. At up to 900GB/sec per GPU, your data moves freely throughout the system at roughly 7X the rate of PCI-E 5.0 x16 GPUs.
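As a rough sanity check on the NVLink comparison, the ratio can be worked out from peak figures. This is a minimal sketch: the 900GB/sec value comes from the text above, while the 128GB/sec bidirectional figure for PCI-E 5.0 x16 is an assumed nominal value (before encoding overhead), and the helper names are hypothetical. Sustained throughput in a real system will be lower than either peak.

```python
# Peak per-GPU NVLink bandwidth quoted above, in GB/sec.
NVLINK_GB_PER_SEC = 900.0

# Assumption: nominal PCI-E 5.0 x16 bandwidth, about 64 GB/sec in each
# direction, 128 GB/sec bidirectional, before encoding overhead.
PCIE5_X16_GB_PER_SEC = 128.0


def bandwidth_ratio(fast_gb_per_sec: float, slow_gb_per_sec: float) -> float:
    """Return how many times faster the first link is than the second."""
    return fast_gb_per_sec / slow_gb_per_sec


def transfer_seconds(size_gb: float, gb_per_sec: float) -> float:
    """Idealized time to move size_gb over a link at its peak rate."""
    return size_gb / gb_per_sec


ratio = bandwidth_ratio(NVLINK_GB_PER_SEC, PCIE5_X16_GB_PER_SEC)
print(f"NVLink vs PCI-E 5.0 x16: {ratio:.2f}X")

# Idealized time to move a hypothetical 100 GB working set over each link.
for label, rate in (("NVLink", NVLINK_GB_PER_SEC), ("PCI-E", PCIE5_X16_GB_PER_SEC)):
    print(f"{label}: {transfer_seconds(100.0, rate):.3f} s")
```

Under these assumptions the ratio lands at about 7X, in line with the figure quoted above.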

Unique Instructions for AI Training, AI Inference, & HPC

Datacenter GPUs support the latest TF32, BFLOAT16, FP64 Tensor Core, Int8, and FP8 instructions that dramatically improve application performance.

Unmatched Memory Capacity, up to 141GB per GPU

Support your largest datasets with up to 141GB of GPU memory, far greater capacity than available on consumer offerings.

Full GPU Direct Capability

Only datacenter GPUs support the complete array of GPU Direct P2P, RDMA, and Storage features. These critical functions remove unnecessary copies and dramatically improve data flow.

Explosive Memory Bandwidth up to 4.8TB/s and ECC

NVIDIA Datacenter GPUs uniquely feature HBM3 and HBM3e GPU memory with up to 4.8TB/sec of bandwidth and full ECC protection.

Superior Monitoring & Management

Datacenter GPUs integrate fully with the host system’s monitoring and management capabilities, such as IPMI. Administrators can manage them with their widely used cluster/grid management tools.
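As one illustration of scripted monitoring, GPU health data can be pulled into a management tool by parsing `nvidia-smi` query output. This is a minimal sketch, not a description of any particular cluster manager: `nvidia-smi` is not named in the text above, the choice of fields is an assumption, the function names are hypothetical, and the sample line contains made-up values.

```python
import csv
import io
import subprocess

# Fields requested from nvidia-smi; the selection is an illustrative assumption.
QUERY_FIELDS = [
    "name",
    "memory.total",
    "memory.used",
    "utilization.gpu",
    "temperature.gpu",
]


def parse_gpu_csv(text: str) -> list[dict[str, str]]:
    """Parse 'csv,noheader,nounits' output into one dict per GPU."""
    rows = []
    for row in csv.reader(io.StringIO(text), skipinitialspace=True):
        if not row:
            continue  # skip blank lines
        rows.append(dict(zip(QUERY_FIELDS, (cell.strip() for cell in row))))
    return rows


def query_gpus() -> list[dict[str, str]]:
    """Run nvidia-smi on the local host (requires an NVIDIA driver)."""
    cmd = [
        "nvidia-smi",
        "--query-gpu=" + ",".join(QUERY_FIELDS),
        "--format=csv,noheader,nounits",
    ]
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return parse_gpu_csv(result.stdout)


# Hypothetical sample line with made-up values, so the parser can be
# exercised on a machine without a GPU.
SAMPLE = "Example GPU, 1000, 250, 50, 40\n"
for gpu in parse_gpu_csv(SAMPLE):
    print(gpu["name"], gpu["memory.used"], "/", gpu["memory.total"], "MiB")
```

On a host with the NVIDIA driver installed, `query_gpus()` returns the same structure with live values, which can then be forwarded to whatever monitoring system is already in place.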

Get a Free NVIDIA AI Enterprise License

Every NVIDIA H100 and H200 PCI-Express GPU comes with a free license for NVIDIA AI Enterprise software tools.

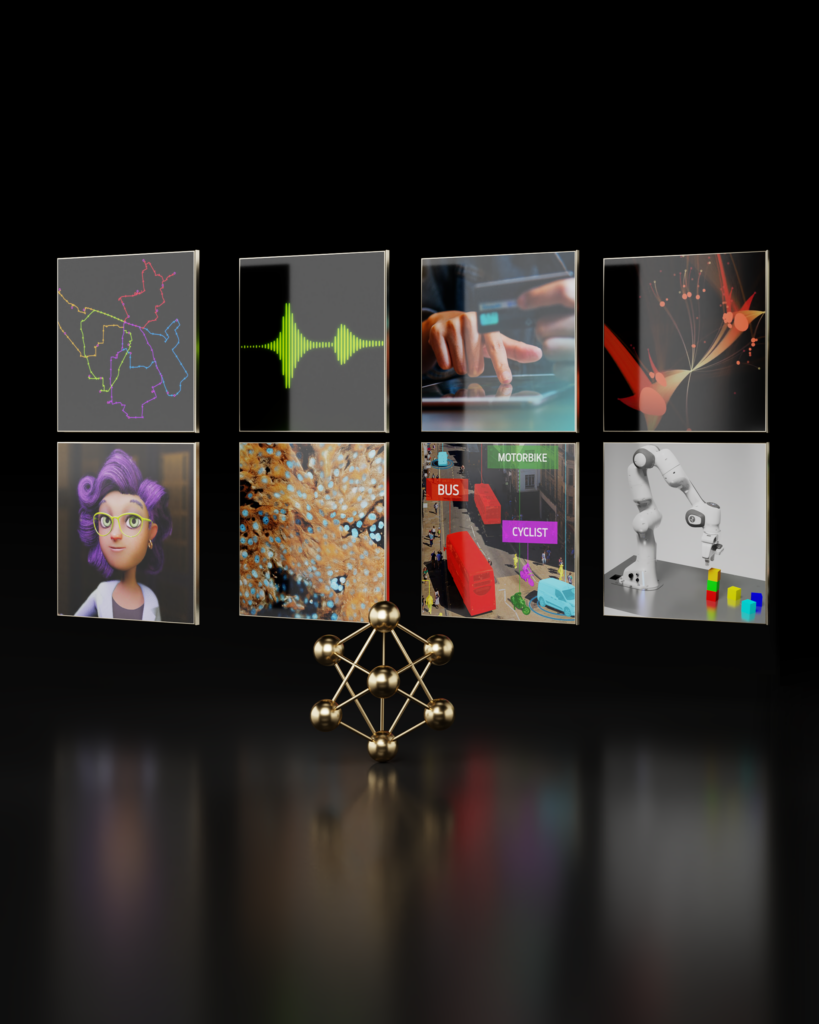

NVIDIA AI Enterprise simplifies the building of an AI-ready solution, accelerating AI development and deployment of production-ready generative AI, computer vision, speech AI, and more.

NVIDIA Ebook: HPC for the Age of AI & Cloud

Read how HPC is evolving in the age of AI and cloud computing, and learn about the NVIDIA framework for HPC.