Bundled Services

DGX H100 deliveries are bundled with Microway services including:

DGX Site Planning

A Microway Solutions Architect will consult with you remotely to plan for the DGX H100’s unique power and cooling requirements. This includes rack diagramming with airflow and power cabling notation, as well as answering questions from facilities staff about the support requirements of the new DGX H100 hardware.

Deployment Services

All DGX OS and container software will be installed, firmware upgraded to the latest versions, your desired DGX containers installed, and deep learning test jobs run. Customers may submit questions to our experts. In some cases, factory-trained Microway experts may travel to your datacenter.

Optional Services

Microway also offers optional DGX services including: container and/or job execution script creation, and partner-provided Deep Learning data preparation consultancy.

Container or Job Execution Script Creation

Creating an effective workflow is key to your success with any hardware resource. The container-based architecture of DGX systems means that proper container management, and even job execution scripts, are a necessity. Microway experts will assist you in creating your default containers, scripts to orchestrate these containers for multiple users in your organization, and methods of dynamically allocating GPUs to containers as required. Experts will also help you plan profiles for the Multi-Instance GPU (MIG) feature to share your resource more effectively across your organization.
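
As a rough illustration of what dynamically allocating GPUs to containers can look like, here is a minimal Python sketch. It is not Microway's tooling: the `GpuPool` class, the `build_docker_command` helper, the user names, and the image name `example-image:latest` are all hypothetical. It assumes a single 8-GPU system and Docker's `--gpus` option with a `device=` list.

```python
import shlex


class GpuPool:
    """Hypothetical in-memory tracker of which GPU indices are free."""

    def __init__(self, total_gpus=8):
        # Assumption: one system exposing GPUs indexed 0..total_gpus-1.
        self.free = list(range(total_gpus))
        self.leases = {}

    def allocate(self, job_name, count):
        """Reserve `count` GPUs for a job and return their indices."""
        if job_name in self.leases:
            raise ValueError(f"{job_name} already holds GPUs")
        if count < 1 or count > len(self.free):
            raise RuntimeError(f"cannot grant {count} GPUs, {len(self.free)} free")
        granted, self.free = self.free[:count], self.free[count:]
        self.leases[job_name] = granted
        return granted

    def release(self, job_name):
        """Return a job's GPUs to the pool."""
        self.free = sorted(self.free + self.leases.pop(job_name))


def build_docker_command(image, gpu_ids, user_command):
    """Build a `docker run` argument list that exposes only the given GPUs."""
    # Docker expects the device list wrapped in literal double quotes
    # when it contains commas, e.g. --gpus '"device=0,1"'.
    device_spec = '"device=' + ",".join(str(i) for i in gpu_ids) + '"'
    return ["docker", "run", "--rm", "--gpus", device_spec, image] + shlex.split(user_command)


if __name__ == "__main__":
    pool = GpuPool()
    gpus = pool.allocate("alice", 2)
    # Prints the command rather than running it; the image name is a placeholder.
    print(" ".join(build_docker_command("example-image:latest", gpus, "python train.py")))
    pool.release("alice")
```

A production setup would more likely rely on a job scheduler or orchestration layer to track allocations across users; the sketch only shows the basic idea of pairing a GPU reservation with a container launch.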

Deep Learning Data Preparation

An overwhelming majority of the time in a deep learning project is spent on the preparation of data. At your option, Microway’s data-science consultant partners will engage with you to: create a custom scope of work, determine the best means to prepare your data for deep learning, create the pre-processing algorithms, assist in pre-processing the training data, and optionally determine effective means of measurement for the overall DL project. Additional services are also available.

Complementary Options

- Separate direct-attached high-speed flash data plane for smaller data sets

- DDN Parallel storage solutions for large datasets (up to multi-petabyte), scale-out user-bases, or ultra-high bandwidth requirements

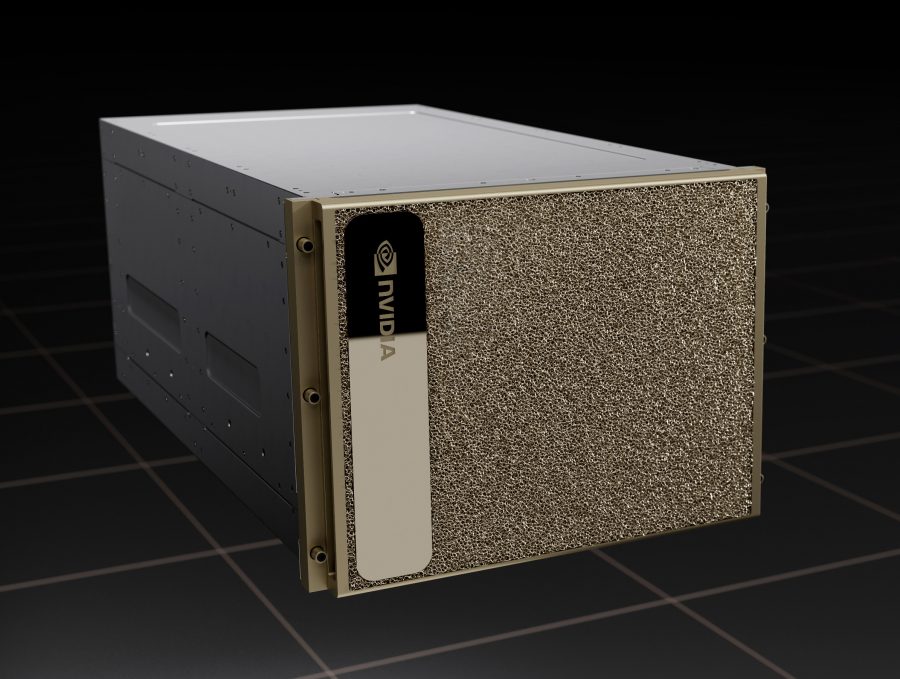

NVIDIA DGX H100 Part Numbers

DGXH-G640F+P2CMI36 – NVIDIA DGX H100 System for Commercial or Government institutions with 3 year support

DGXH-G640F+P2CMI48 – NVIDIA DGX H100 System for Commercial or Government institutions with 4 year support

DGXH-G640F+P2CMI60 – NVIDIA DGX H100 System for Commercial or Government institutions with 5 year support

DGXH-G640F+P2EDI36 – NVIDIA DGX H100 System for EDU (Educational institutions) with 3 year support

DGXH-G640F+P2EDI48 – NVIDIA DGX H100 System for EDU (Educational institutions) with 4 year support

DGXH-G640F+P2EDI60 – NVIDIA DGX H100 System for EDU (Educational institutions) with 5 year support

All NVIDIA DGX Systems are sold and delivered with a Standard DGX Hardware Warranty and a minimum 3 years of DGX Support services. These Support services can be renewed annually.

Continuing to renew your Support services ensures you receive the latest DGX software updates (including frameworks) and retain the DGX H100 system’s outstanding SLA commitments.

Contact us for pricing

Final pricing depends upon configuration and any applicable discounts, including education or NVIDIA Inception. Request a custom quotation to receive your applicable discounts.

NVIDIA requires all DGX purchases to include a support services contract. Ensure all quotes you receive include this mandatory DGX support.