This article provides an in-depth discussion and analysis of the 7nm AMD EPYC processors (codenamed “Rome” and based on AMD’s Zen2 architecture). EPYC “Rome” processors replace the previous “Naples” processors and have been available for sale since August 7th, 2019. We have also published an AMD EPYC “Rome” CPU Review that you may wish to read. Note: these processors have since been superseded by the “Milan” AMD EPYC CPUs.

These new CPUs are the second iteration of AMD’s EPYC server processor family. They remain compatible with the existing workstation and server platforms, but offer significant feature and performance improvements. Some of the new features (e.g., PCI-E 4.0) will require updated/revised platforms. If you’re looking to upgrade to or deploy these new CPUs, please speak with one of our experts to learn more.

Important features/changes in EPYC “Rome” CPUs include:

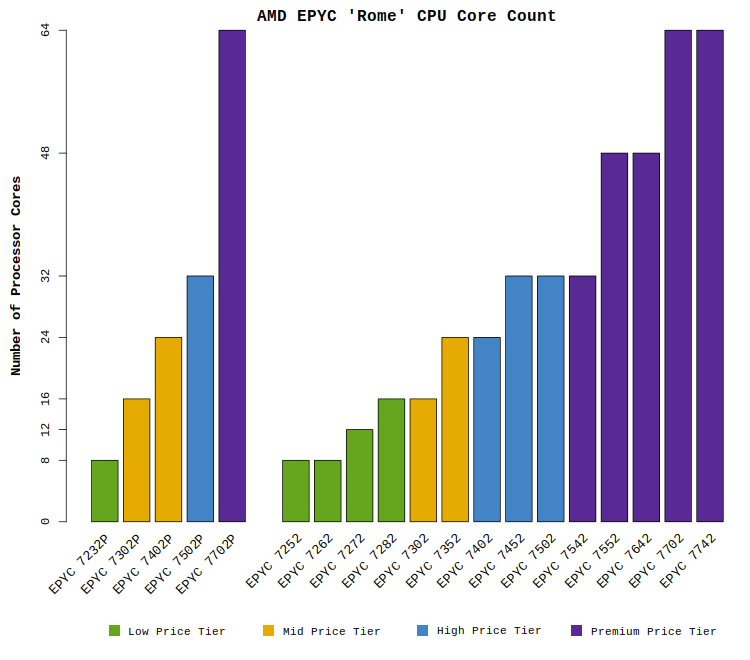

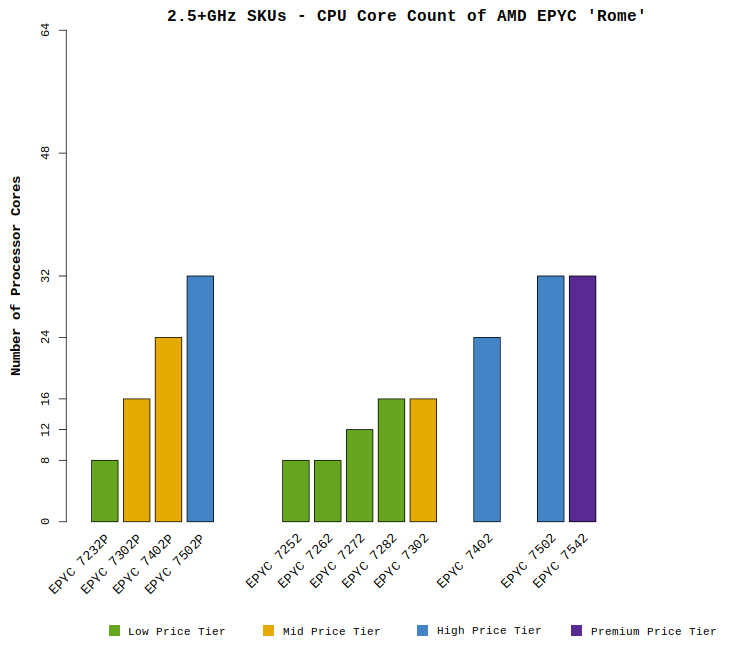

- Up to 64 processor cores per socket (with options for 8-, 12-, 16-, 24-, 32-, and 48-cores)

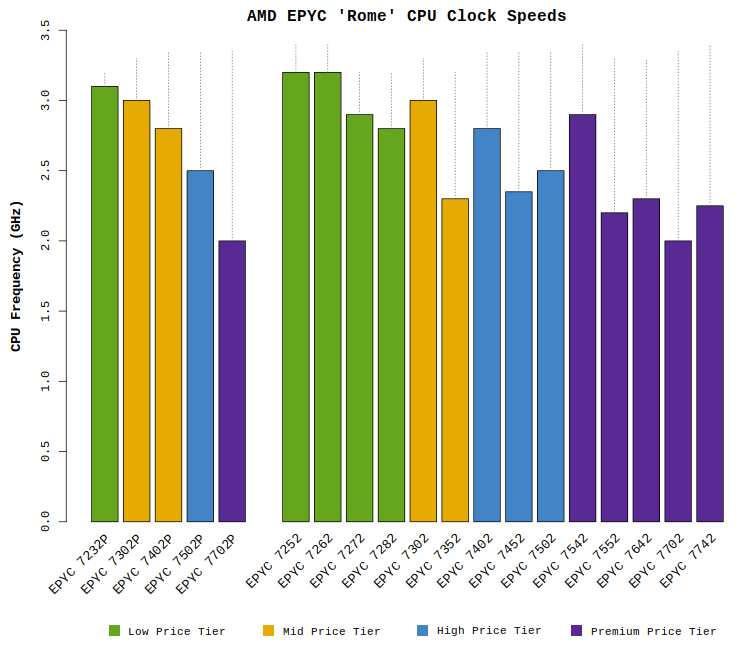

- Improved CPU clock speeds up to 3.1GHz (with Boost speeds up to 3.4GHz)

- Increased computational performance:

- Full support for 256-bit AVX2 instructions with two 256-bit FMA units per CPU core

The previous “Naples” architecture split 256-bit instructions into two separate 128-bit operations.

- Up to 16 double-precision FLOPS per cycle per core

- Double-precision floating point multiplies complete in 3 cycles (down from 4)

- 15% increase in instructions completed per clock cycle (IPC) for integer operations


- Memory capacity & performance features:

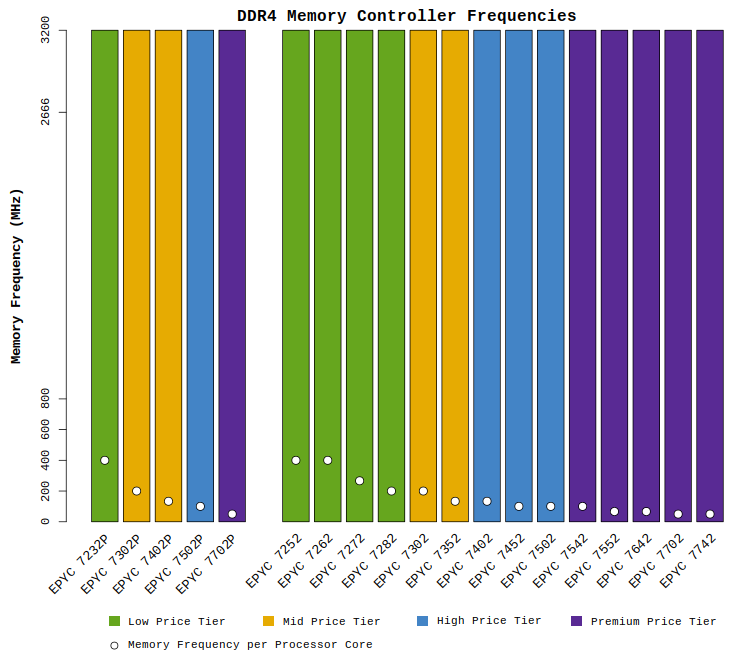

- Eight-channel memory controller on each CPU

- Support for DDR4 memory speeds up to 3200MHz (up from 2666MHz)

- Up to 4TB memory per CPU socket

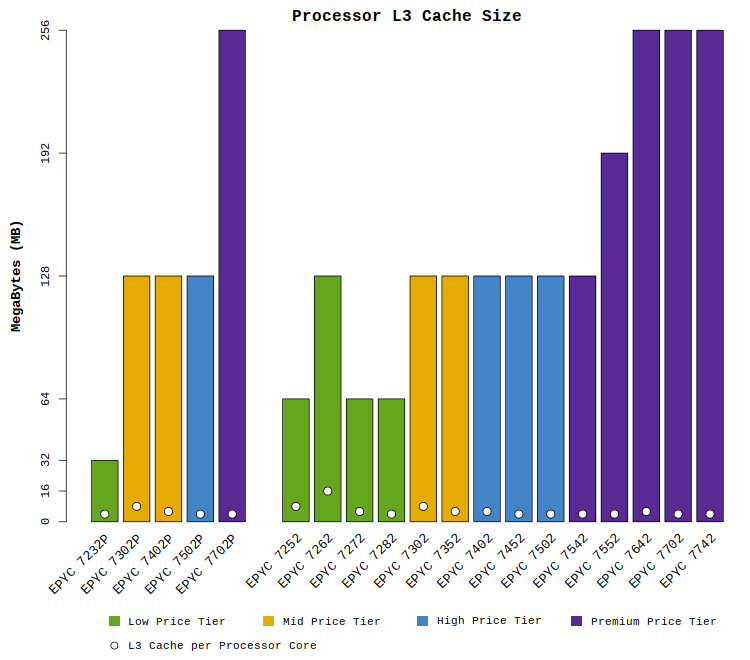

- Up to 256MB L3 cache per CPU (up from 64MB)

- Support for PCI-Express generation 4.0 (which doubles the throughput of gen 3.0)

- Up to 128 lanes of PCI-Express per CPU socket

- Improvements to NUMA architecture:

- Simplified design with one NUMA domain per CPU Socket

- Uniform latencies between CPU dies (plus fewer hops between cores)

- Improved InfinityFabric performance (read speed per clock is doubled to 32 bytes)

- Integrated in-silicon security mitigations for Spectre
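Several of the headline figures in the list above follow from simple arithmetic. The sketch below derives the 16 double-precision FLOPS per cycle per core and the theoretical peak memory bandwidth per socket. The function names are illustrative. The 8 bytes per transfer reflects the standard 64-bit DDR4 channel width, the “Naples” comparison assumes a 128-bit datapath (per the note about split 256-bit instructions) and eight channels at the earlier 2666MHz speed, and all memory channels are assumed to be populated.

```python
DOUBLE_BITS = 64  # width of one double-precision value


def dp_flops_per_cycle(vector_bits: int, fma_units: int) -> int:
    """Peak double-precision FLOPS per cycle for one core."""
    lanes = vector_bits // DOUBLE_BITS  # doubles processed per FMA unit
    return fma_units * lanes * 2        # one FMA counts as a multiply plus an add


def peak_memory_bandwidth_gbs(channels: int, mega_transfers_per_s: int,
                              bytes_per_transfer: int = 8) -> float:
    """Theoretical peak memory bandwidth per socket in GB/s."""
    return channels * mega_transfers_per_s * bytes_per_transfer / 1000


rome_flops = dp_flops_per_cycle(vector_bits=256, fma_units=2)
naples_flops = dp_flops_per_cycle(vector_bits=128, fma_units=2)  # assumed 128-bit datapath
rome_bw = peak_memory_bandwidth_gbs(channels=8, mega_transfers_per_s=3200)
naples_bw = peak_memory_bandwidth_gbs(channels=8, mega_transfers_per_s=2666)  # assumed 8 channels

print(f"Rome:   {rome_flops} DP FLOPS/cycle/core, {rome_bw:.1f} GB/s per socket")
print(f"Naples: {naples_flops} DP FLOPS/cycle/core, {naples_bw:.1f} GB/s per socket")
```

These are theoretical ceilings; measured bandwidth and throughput will be lower in practice.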

With a product this complex, it’s very difficult to cover every aspect of the design. Here, we concentrate primarily on the performance of the processors for HPC & AI applications.

Before diving into the details, it helps to keep the following recommendations in mind. Based on our experience with HPC and Deep Learning deployments, we offer this guidance for selecting among the EPYC options:

- 8-core EPYC CPUs – not recommended for HPC

While available for a low price, these models are not as cost-effective as many of the higher core count models.

- 12-, 16-, and 24-core EPYC CPUs – suitable for most HPC workloads

While not typically offering the best cost-effectiveness, they provide excellent performance at lower price points.

- 32-core EPYC CPUs – excellent for HPC workloads

These models offer excellent price/performance along with relatively high clock speeds and core counts.

- 48-core and 64-core EPYC CPUs – suitable for certain HPC workloads

Although the highest core count models appear to provide the best cost-effectiveness and power efficiency, many applications exhibit diminishing returns at the highest core counts. For scalable applications that are not memory bandwidth bound, these EPYC CPUs will be excellent choices.
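One way to see why bandwidth-bound codes can show diminishing returns at the highest core counts is to divide a socket's theoretical memory bandwidth among its cores. The sketch below is a rough illustration only: it assumes eight channels of DDR4-3200 on every model (lower-end models may run slower memory) and an even split across cores, which real applications will not match exactly.

```python
# Theoretical peak per socket: 8 channels x 3200 MT/s x 8 bytes per transfer
SOCKET_BANDWIDTH_GBS = 8 * 3200 * 8 / 1000

CORE_COUNTS = (8, 12, 16, 24, 32, 48, 64)


def bandwidth_per_core(cores: int) -> float:
    """Memory bandwidth available to each core if the socket total is shared evenly."""
    return SOCKET_BANDWIDTH_GBS / cores


for cores in CORE_COUNTS:
    print(f"{cores:2d} cores: {bandwidth_per_core(cores):5.1f} GB/s per core")
```

Under these assumptions, each core in a 64-core CPU has one eighth of the bandwidth available to each core in an 8-core CPU, which is why benchmarking a representative workload matters before choosing the highest core counts.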

Microway operates a Test Drive cluster to assist in evaluating and comparing these options as users develop the specifications for their new HPC & AI deployments. We would be happy to help you evaluate AMD EPYC processors as you plan your purchase.

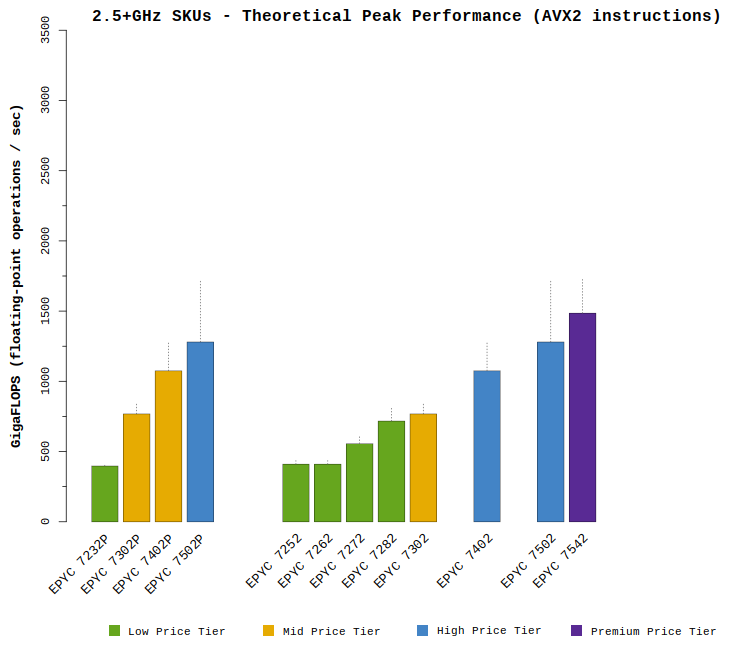

Unprecedented Computational Performance

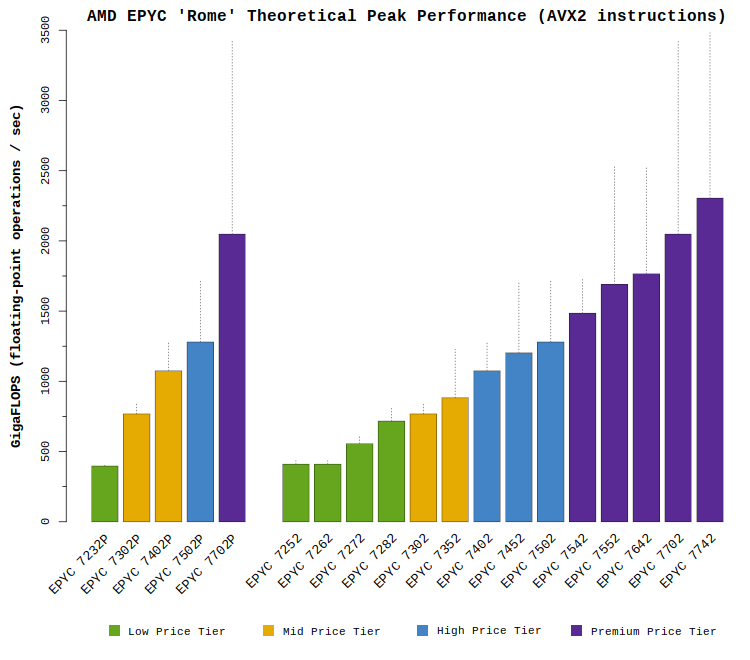

The EPYC “Rome” processors deliver new capabilities and exceptional performance. Many models provide over 1 TFLOPS (one trillion double-precision, 64-bit floating-point operations per second) and several models provide 2 TFLOPS. This performance is achieved by doubling the computational power of each core and doubling the maximum number of cores. The plot below shows the performance range across this new CPU line-up:

As shown above, the shaded/colored bars indicate the expected performance ranges for each CPU model. These peak performance numbers are achieved when executing 256-bit AVX2 instructions with FMA. Note that only a small set of codes issue almost exclusively AVX2 FMA instructions (e.g., LINPACK). Most applications issue a variety of instructions and will achieve lower than the peak FLOPS values shown above. Applications which have not been re-compiled with an appropriate compiler would not include AVX2 instructions and would thus achieve lower performance.

The dotted lines indicate the possible peak performance if all cores are operating at boosted clock speeds. While theoretically possible for short bursts, sustained performance at these levels is not expected. Sections of code with dense, vectorized instructions are very demanding, and typically result in the processor core slightly lowering its clock speed (this behavior is not unique to AMD CPUs). While AMD has not published specific clock speed expectations for such codes, Microway expects the EPYC “Rome” CPUs to operate near their “base” clock speed values even when executing code with intensive instructions.
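The peak values discussed above can be approximated as cores × clock speed × FLOPS per cycle. The sketch below uses the 16 double-precision FLOPS per cycle per core quoted earlier in this article. The core counts and clock speeds are illustrative inputs, not the specifications of a particular SKU.

```python
FLOPS_PER_CYCLE = 16  # double-precision FLOPS per cycle per core with AVX2 FMA


def peak_gflops(cores: int, clock_ghz: float,
                flops_per_cycle: int = FLOPS_PER_CYCLE) -> float:
    """Theoretical peak double-precision GFLOPS for one CPU socket."""
    return cores * clock_ghz * flops_per_cycle


# Illustrative inputs: a 64-core part at a 2.0GHz base and a 3.4GHz all-core boost
base = peak_gflops(cores=64, clock_ghz=2.0)
boost = peak_gflops(cores=64, clock_ghz=3.4)

print(f"Peak at base clock:  {base / 1000:.2f} TFLOPS")
print(f"Peak at boost clock: {boost / 1000:.2f} TFLOPS (short bursts at best)")
```

The base-clock figure corresponds to the bars in the plot and the boost-clock figure to the dotted lines; as noted above, sustained performance is expected to sit near the base-clock value.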

The CPU models above are sorted by price (as discussed in the next section). The lowest-performance models provide fewer CPU cores, less cache, and slower memory speeds. Higher-end models offer high core counts for the best performance. HPC and AI groups are generally expected to favor the mid-range processor models, as the highest core count CPUs are priced at a premium.

Note that those models which only support single-CPU installations are separated on the left side of each plot.

AMD EPYC “Rome” Price Ranges

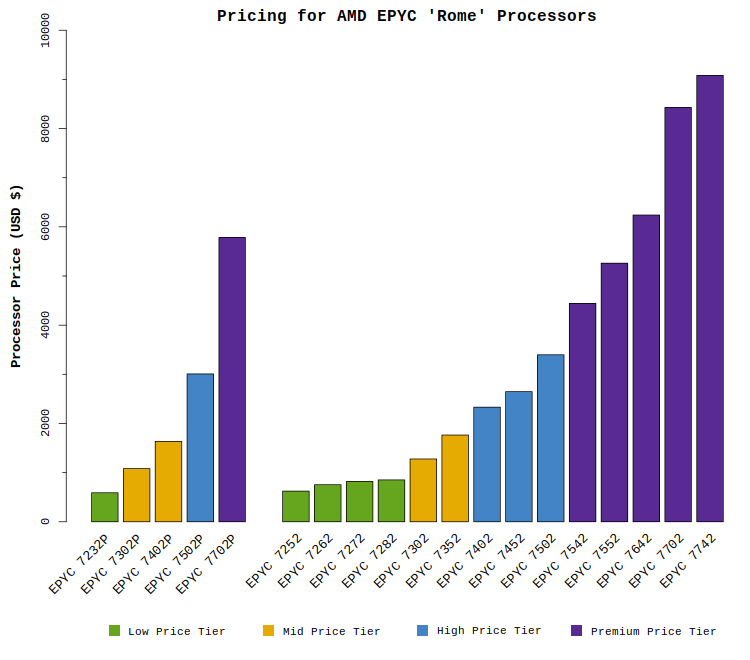

The new EPYC “Rome” processors span a fairly wide range of prices, so budget must be considered when selecting a CPU. While the entry-level models are under $1,000, the highest-end EPYC processors cost nearly $10,000 each. It would be frustrating to plan for 64-core processors when the budget cannot support the price. The plot below compares the prices of the EPYC “Rome” processors:

All the CPUs in this article are sorted by price (as shown in the plot above). To ease comparisons, all of the plots in this article are ordered to match the above plot. Keep this pricing in mind as you review this article and plan your system architecture. The color of each bar indicates the expected customer price per CPU:

- Low price tier: prices below $1,000 per CPU

- Mid price tier: prices between $1,000 and $2,000

- High price tier: prices between $2,000 and $4,000

- Premium price tier: prices above $4,000 per EPYC CPU

Most HPC users are expected to select CPU models around the high price tier. These models provide industry-leading performance (and excellent performance per dollar) for a price under $4,000 per processor. Applications can certainly leverage the premium EPYC processor models, but they will come at a higher price.
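For readers who want to bucket a list of quotes the same way, the four tiers above can be expressed as a small function. This is a sketch; the function name is illustrative, and the handling of prices that fall exactly on a boundary ($1,000, $2,000, or $4,000) is an assumption, since the tier descriptions do not specify it.

```python
def price_tier(price_usd: float) -> str:
    """Map a per-CPU price to the tiers used in this article.

    Boundary handling is an assumption: exactly $1,000 counts as mid,
    exactly $2,000 counts as high, and exactly $4,000 counts as high.
    """
    if price_usd < 1000:
        return "low"
    if price_usd < 2000:
        return "mid"
    if price_usd <= 4000:
        return "high"
    return "premium"


# Hypothetical prices, for illustration only
for price in (650, 1500, 3400, 7000):
    print(f"${price:,}: {price_tier(price)} tier")
```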

AMD “Rome” EPYC Processor Specifications

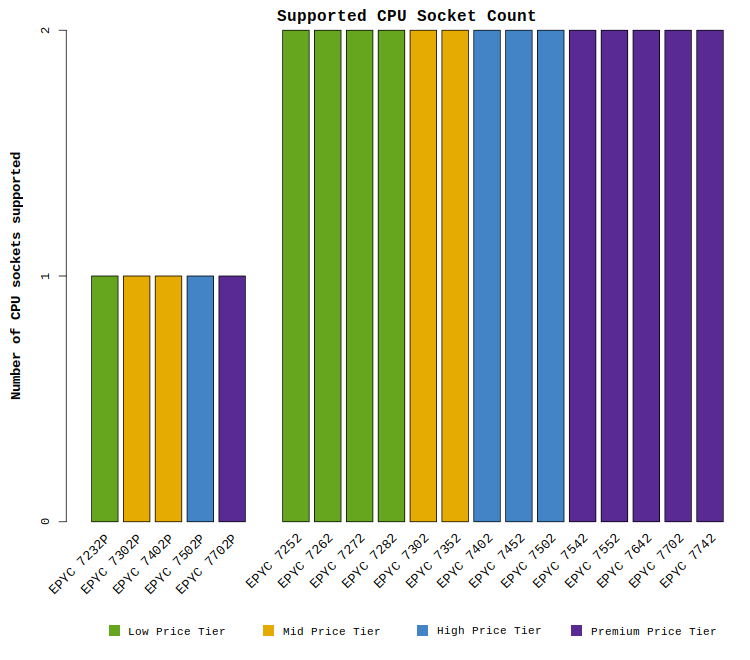

The set of tabs below compares the features and specifications of this new EPYC processor family. Take note that certain CPU SKUs are designed for single-socket systems (indicated with a P suffix on the part number). All other models may be used in either a single- or dual-socket system. The P-series AMD EPYC CPUs have a lower price and are thus the most cost-effective models, but remember that they are not available in dual-CPU systems.

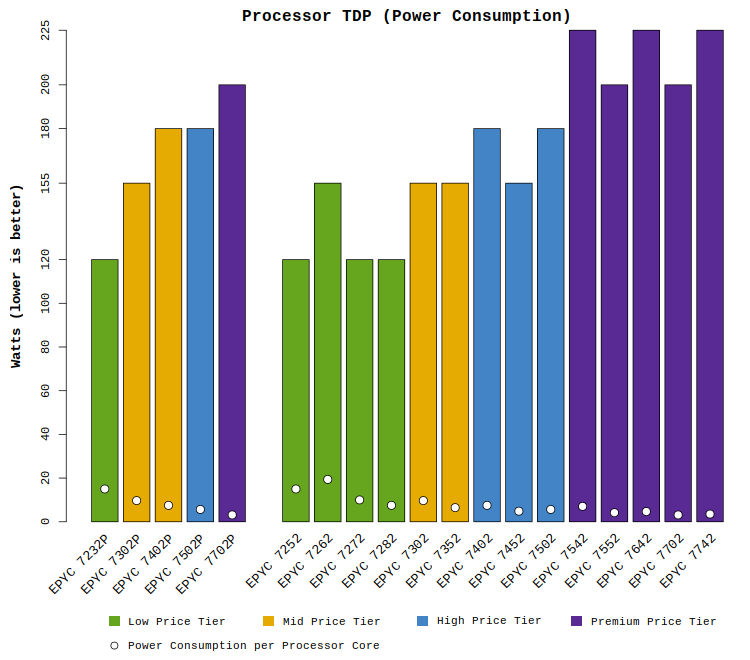

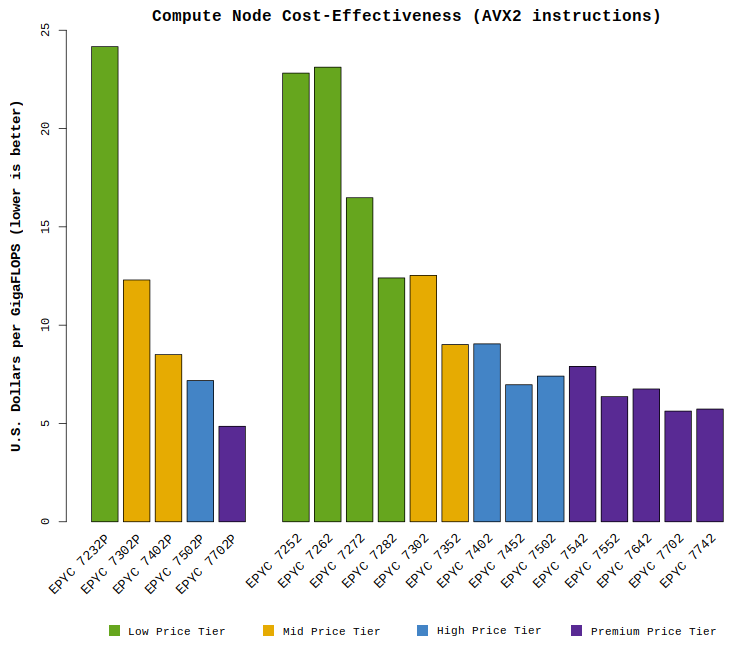

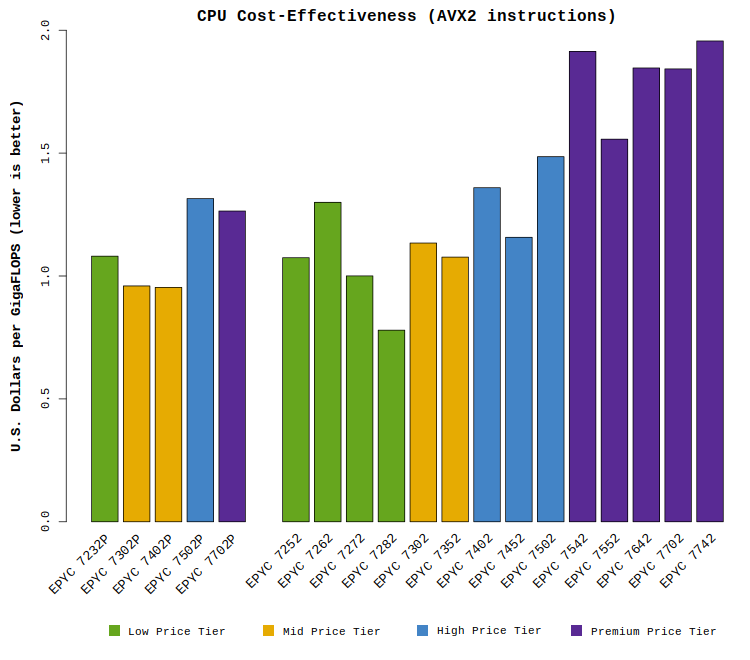

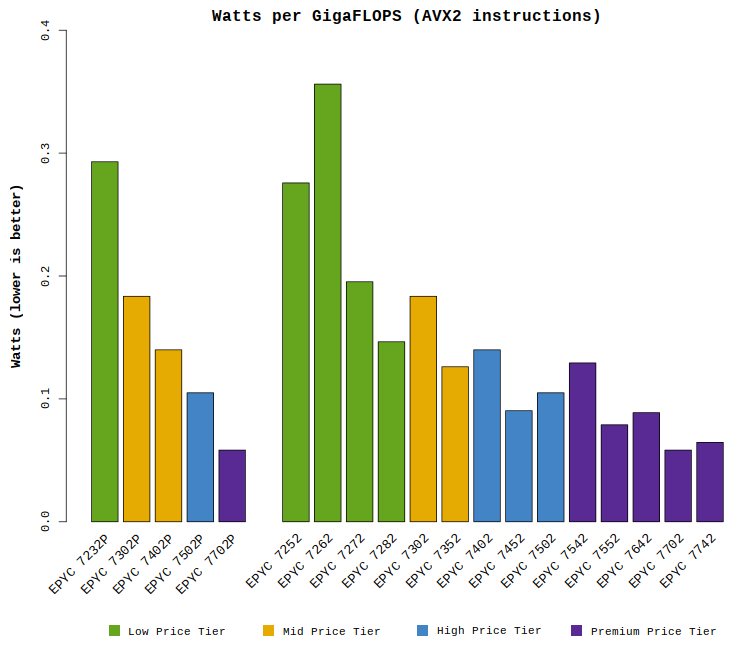

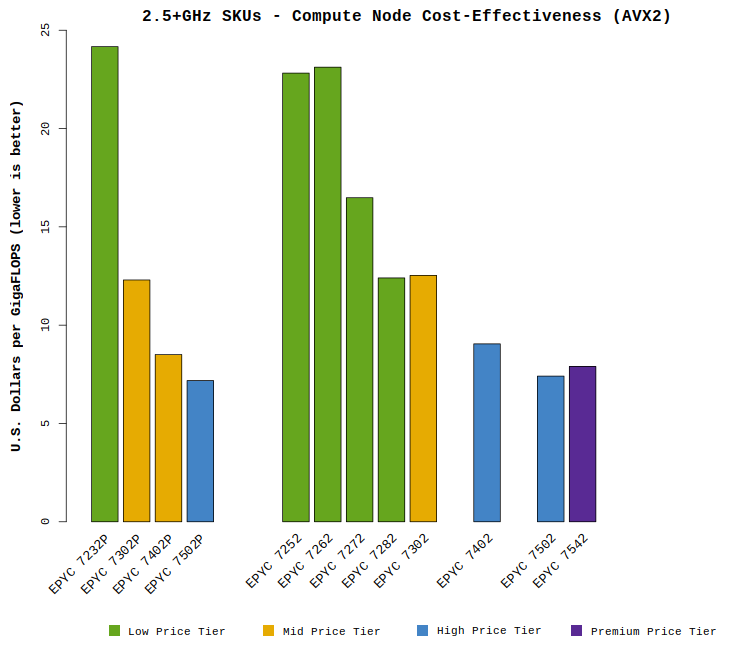

Cost-Effectiveness and Power Efficiency of EPYC “Rome” CPUs

Overall, the AMD EPYC processors provide great value in price spent versus performance achieved. However, there is a spectrum of efficiency, with certain CPU models offering particularly compelling value. Also remember that the prices and power requirements for some of the top models are fairly high. Savvy readers may find the following facts useful:

- The most cost-effective CPUs likely to be selected are EPYC 7452 and EPYC 7552

- If a balance of cost-effectiveness and higher clock speed is needed, look to EPYC 7502

- While the EPYC 7702 looks to be the most cost-effective on paper, it is important to consider that many applications may not be able to scale efficiently to 64 cores. Benchmark before making the selection.

- Applications which can be satisfied by a single CPU will benefit greatly from the single-socket EPYC 7xx2P models

The plots below compare the cost-effectiveness and power efficiency of these CPU models. The intent is to go beyond the raw “speeds and feeds” of the processors to determine which models will be most attractive for HPC and Deep Learning/AI deployments.
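The metrics behind such comparisons can be sketched as theoretical peak GFLOPS per dollar and per watt. The example below is one plausible formulation, not necessarily the exact method used for the plots. Every entry in the list is a hypothetical placeholder rather than a real SKU, price, or power rating, and the `Cpu` class is introduced purely for illustration.

```python
from dataclasses import dataclass

FLOPS_PER_CYCLE = 16  # double-precision FLOPS per cycle per core with AVX2 FMA


@dataclass(frozen=True)
class Cpu:
    name: str
    cores: int
    base_ghz: float
    price_usd: float
    tdp_watts: float

    @property
    def peak_gflops(self) -> float:
        return self.cores * self.base_ghz * FLOPS_PER_CYCLE

    @property
    def gflops_per_dollar(self) -> float:
        return self.peak_gflops / self.price_usd

    @property
    def gflops_per_watt(self) -> float:
        return self.peak_gflops / self.tdp_watts


# Hypothetical placeholder entries, not real SKUs, prices, or power ratings
cpus = [
    Cpu("example-16c", cores=16, base_ghz=3.0, price_usd=1500, tdp_watts=150),
    Cpu("example-32c", cores=32, base_ghz=2.5, price_usd=2000, tdp_watts=180),
    Cpu("example-64c", cores=64, base_ghz=2.0, price_usd=6000, tdp_watts=200),
]

# Rank from most to least cost-effective
ranked = sorted(cpus, key=lambda c: c.gflops_per_dollar, reverse=True)
for cpu in ranked:
    print(f"{cpu.name}: {cpu.gflops_per_dollar:.3f} GFLOPS/$, "
          f"{cpu.gflops_per_watt:.2f} GFLOPS/W")
```

Because these figures use theoretical peak performance, they favor high core counts; substituting measured application throughput for `peak_gflops` gives a more realistic picture for codes that do not scale to every core.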

Recommended CPU Models for HPC & AI/Deep Learning

Although most of the EPYC CPUs will offer excellent performance, it is common for computationally-demanding sites to set a floor on CPU clock speeds (usually around 2.5GHz). The intent is to ensure that no workload performs too poorly, as not all workloads are well parallelized. While there are users who would prefer higher clock speeds, experience shows that most groups settle on a minimum clock speed of around 2.5GHz. With that in mind, the comparisons below highlight only those CPU models which offer clock speeds of 2.5GHz or higher.
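Applying such a clock speed floor to a candidate list is a simple filter. The sketch below assumes the floor is applied to each model's base clock; the model names and clock speeds are hypothetical placeholders, not real SKUs.

```python
MIN_CLOCK_GHZ = 2.5  # clock speed floor discussed above

# Hypothetical candidates: model name -> base clock in GHz
candidates = {
    "model-A": 2.0,
    "model-B": 2.5,
    "model-C": 3.0,
    "model-D": 2.35,
}


def meets_clock_floor(models: dict[str, float],
                      floor_ghz: float = MIN_CLOCK_GHZ) -> list[str]:
    """Return the names of models whose clock speed is at or above the floor."""
    return [name for name, ghz in models.items() if ghz >= floor_ghz]


shortlist = meets_clock_floor(candidates)
print(shortlist)
```

Sites with mostly well-parallelized workloads may choose a lower `floor_ghz` in exchange for more cores.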