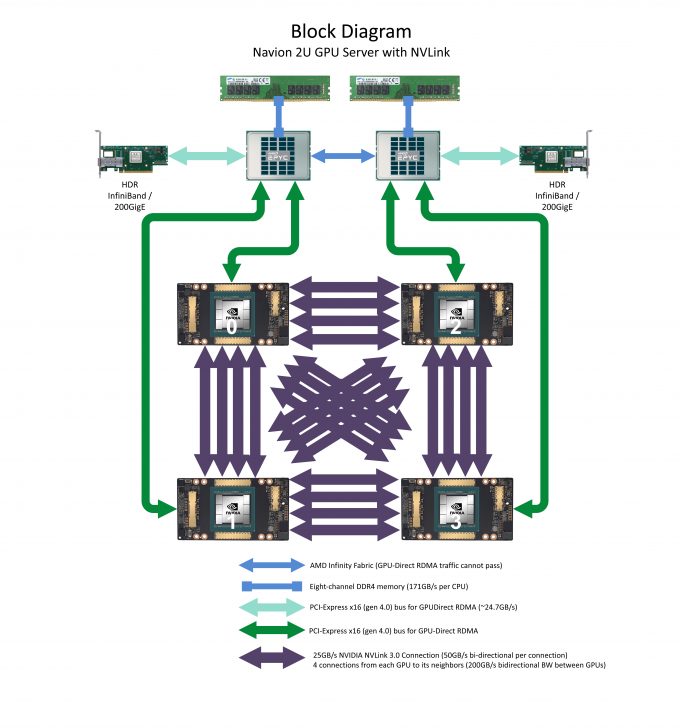

The Navion 2U GPU Server with NVLink is a balanced platform that pairs leadership-class NVIDIA A100 Tensor Core GPUs with AMD EPYC™ CPUs.

Each NVIDIA A100 GPU is connected to each of its neighbors with 200GB/s of bidirectional bandwidth, making the system well suited to applications where high-bandwidth GPU:GPU communication is critical.
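As a rough sizing aid, the sketch below turns the 200GB/s bidirectional figure into a per-direction rate and estimates a best-case one-way copy time between two neighboring GPUs. It assumes the bidirectional number splits evenly (100GB/s each way) and ignores latency, protocol overhead, and software costs, so real transfers will take longer. The function names are illustrative only.

```python
# Rough NVLink transfer-time estimate for one GPU pair.
# Assumption: the quoted 200 GB/s is bidirectional and splits evenly,
# giving 100 GB/s in each direction; all overheads are ignored.

PAIR_BIDIRECTIONAL_GBPS = 200.0  # GB/s between two GPUs, both directions combined


def per_direction_bandwidth(bidirectional_gbps: float) -> float:
    """Return the one-way bandwidth in GB/s for a symmetric link."""
    return bidirectional_gbps / 2


def best_case_copy_seconds(size_gb: float,
                           bidirectional_gbps: float = PAIR_BIDIRECTIONAL_GBPS) -> float:
    """Lower bound on the time to copy size_gb from one GPU to a neighbor."""
    return size_gb / per_direction_bandwidth(bidirectional_gbps)


if __name__ == "__main__":
    for size_gb in (1, 10, 40):  # 40 GB = full memory of a 40GB A100
        print(f"{size_gb:>3} GB -> {best_case_copy_seconds(size_gb):.2f} s (best case)")
```

Treat these numbers as a floor: measured peer-to-peer copies will be slower than the theoretical link rate.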

The system also supports PCI-E Gen 4, offering up to 2X the data rate of the previous generation between the host CPUs and PCI-E Gen 4-capable I/O devices, including NVIDIA A100 GPUs and Mellanox® HDR 200Gb InfiniBand adapters.
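The "2X" claim can be checked with a small calculation. This sketch assumes the standard PCI-E 3.0/4.0 signaling rates (8 and 16 GT/s per lane, both with 128b/130b encoding), which are not stated on this page, and computes raw one-way bandwidth before packet overhead. It also treats 200Gb HDR InfiniBand as 25GB/s per direction to show why an HDR adapter needs a Gen 4 x16 slot to run at full rate.

```python
# Raw PCI-E bandwidth per direction, before packet/protocol overhead.
# Assumption: Gen 3 = 8 GT/s, Gen 4 = 16 GT/s per lane, both 128b/130b encoded.

GT_PER_S = {3: 8.0, 4: 16.0}  # giga-transfers per second per lane
ENCODING_EFFICIENCY = 128 / 130


def pcie_gbytes_per_s(gen: int, lanes: int) -> float:
    """Raw one-way bandwidth in GB/s for a PCI-E link of the given generation."""
    gbits_per_lane = GT_PER_S[gen] * ENCODING_EFFICIENCY  # usable Gbit/s per lane
    return gbits_per_lane * lanes / 8  # bits -> bytes


HDR_GBYTES_PER_S = 200 / 8  # 200 Gb/s HDR InfiniBand = 25 GB/s per direction

if __name__ == "__main__":
    for gen in (3, 4):
        bw = pcie_gbytes_per_s(gen, 16)
        fits = "yes" if bw >= HDR_GBYTES_PER_S else "no"
        print(f"Gen {gen} x16: {bw:.2f} GB/s per direction; carries 200Gb HDR: {fits}")
```

Real throughput is somewhat lower once packet headers are included, but the ratio between generations stays at 2X.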

Features

- 4 NVIDIA A100 GPUs with: 40GB HBM2 or 80GB HBM2e memory, 3rd Gen NVIDIA NVLink® Technology, and next generation Tensor Cores supporting TF32 instructions

- 200GB/sec of GPU:GPU bandwidth between any two GPUs (mesh architecture)

- Leadership-Class AMD EPYC CPUs, with a total of up to 128 processor cores

- Supports GPUDirect® RDMA over PCI-E x16 4.0 to Mellanox 200Gb HDR InfiniBand adapters

- NVIDIA CUDA® SDK installed and configured: ready to run CUDA jobs!

- Reliable! Customers commonly use Microway servers for 5+ years.
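The mesh wording in the list above can be modeled in a few lines: with four GPUs wired all-to-all, there are six direct pairs, every GPU reaches the other three in one hop, and 3 × 200GB/s gives 600GB/s of NVLink bandwidth per GPU, consistent with NVIDIA's published total for the A100. This is a simplified illustration with hypothetical helper names; it does not query hardware (on a live system, `nvidia-smi topo -m` reports the actual link matrix).

```python
from itertools import combinations

NUM_GPUS = 4
PAIR_BANDWIDTH_GBPS = 200  # bidirectional GB/s between any two GPUs (from the spec)

# In a full mesh every GPU has a direct NVLink connection to every other GPU.
pairs = list(combinations(range(NUM_GPUS), 2))


def peers(gpu: int) -> list[int]:
    """GPUs directly connected to `gpu` in the full mesh."""
    return [other for other in range(NUM_GPUS) if other != gpu]


def per_gpu_nvlink_bandwidth(gpu: int) -> int:
    """Sum of the bidirectional bandwidth over all of a GPU's direct links."""
    return len(peers(gpu)) * PAIR_BANDWIDTH_GBPS


if __name__ == "__main__":
    print(f"{len(pairs)} direct GPU pairs: {pairs}")
    for gpu in range(NUM_GPUS):
        print(f"GPU{gpu}: peers {peers(gpu)}, {per_gpu_nvlink_bandwidth(gpu)} GB/s total")
```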

Specifications

- (2) processor sockets with AMD EPYC CPUs (clock speed: up to 3.7 GHz)

- Eight-Channel DDR4 3200 MHz ECC/Registered Memory (32 slots), up to 8 TB total memory

- Four PCI-Express x16 4.0 low-profile slots + one PCI-Express x8 4.0 slot

- 4 drive bays for hard drives and SSDs, all NVMe capable

- Removable Storage:

  - Rear USB 3.0 ports

  - Front-accessible hot-swap hard drives

- Two integrated RJ45 10 Gigabit Ethernet ports

- IPMI 2.0 with Dedicated LAN Support

- 2200W 2+0 high-efficiency power supplies
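The memory figures in the specifications above imply a particular layout, worked out below. The sketch assumes eight channels per socket, the 32 slots split evenly across the two sockets, and every channel running at the rated 3200 MT/s with a 64-bit data bus; it computes theoretical peak bandwidth only, and actual memory speed can depend on how many DIMMs are installed per channel.

```python
SOCKETS = 2
CHANNELS_PER_SOCKET = 8    # "Eight-Channel" DDR4, assumed per EPYC socket
DIMM_SLOTS = 32
MAX_MEMORY_GB = 8 * 1024   # "up to 8 TB"
DDR4_MT_PER_S = 3200       # DDR4-3200 transfer rate (mega-transfers per second)
BYTES_PER_TRANSFER = 8     # 64-bit data bus per channel (ECC bits excluded)

channels = SOCKETS * CHANNELS_PER_SOCKET
dimms_per_channel = DIMM_SLOTS // channels
dimm_size_gb = MAX_MEMORY_GB // DIMM_SLOTS  # DIMM size needed to reach 8 TB

# Theoretical peak: transfers/s x bytes/transfer, ignoring refresh and other overheads.
channel_peak_gbs = DDR4_MT_PER_S * BYTES_PER_TRANSFER / 1000
system_peak_gbs = channel_peak_gbs * channels

if __name__ == "__main__":
    print(f"{channels} channels, {dimms_per_channel} DIMMs per channel")
    print(f"8 TB across {DIMM_SLOTS} slots -> {dimm_size_gb} GB DIMMs")
    print(f"Peak: {channel_peak_gbs:.1f} GB/s per channel, {system_peak_gbs:.1f} GB/s total")
```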

Accessories/Options

- 25/50/100G Ethernet or ConnectX®-6 200Gb HDR / ConnectX-5 100Gb EDR InfiniBand

- UPS power backup and surge suppression

Support

Supported for Life

Our technicians and sales staff consistently ensure that your entire experience with Microway is handled promptly, creatively, and professionally.

Microway's experienced technicians provide telephone support for the lifetime of your server(s). After the initial warranty period, hardware warranties are offered on an annual basis. Out-of-warranty repairs are available on a time-and-materials basis.

Price

System Price: $60,000+

Each Microway system is customized to your requirements. Final pricing depends upon configuration and any applicable educational or government discounts.

Call a Microway Sales Engineer for assistance at 508.746.7341, or submit a request for more information.